Application development is new again.

The ever-changing business landscape, fueled by digital transformation initiatives across every industry, demands that businesses deliver innovative customer- and partner-facing solutions, not just tactical apps to support internal functions.

As a result, application developers are playing an increasingly important role in achieving business goals. The financial services sector is a notable example, with companies like JPMorgan Chase spending millions on emerging fintech such as online and mobile tools for opening accounts and completing transactions, real-time stock portfolio values, and electronic trading and cash management services.

But businesses are finding that creating market-differentiating applications to improve the customer experience, and subsequently customer satisfaction, requires some significant adjustments. Using non-relational database technologies, building another level of development expertise, and driving optimal data performance will all be on their agendas.

Of course, all of this must be done with a focus on data governance – backed by data modeling – as the guiding principle for accurate, real-time analytics and business intelligence (BI).

Evolving Application Development Requirements

The development organization must identify which systems, processes and even jobs must evolve to meet demand. The factors it will consider include agile development, skills transformation and faster querying.

Rapid delivery is the rule, with products released in usable increments during sprints as part of ongoing, iterative development. Developers can move directly from conceptual models that define high-level requirements to low-level physical data models that are incorporated into the application logic, with logical modeling following afterward. This route makes it easier to accommodate change, which supports speedy baselining, fast-track sprint cycles and quick application scaling.

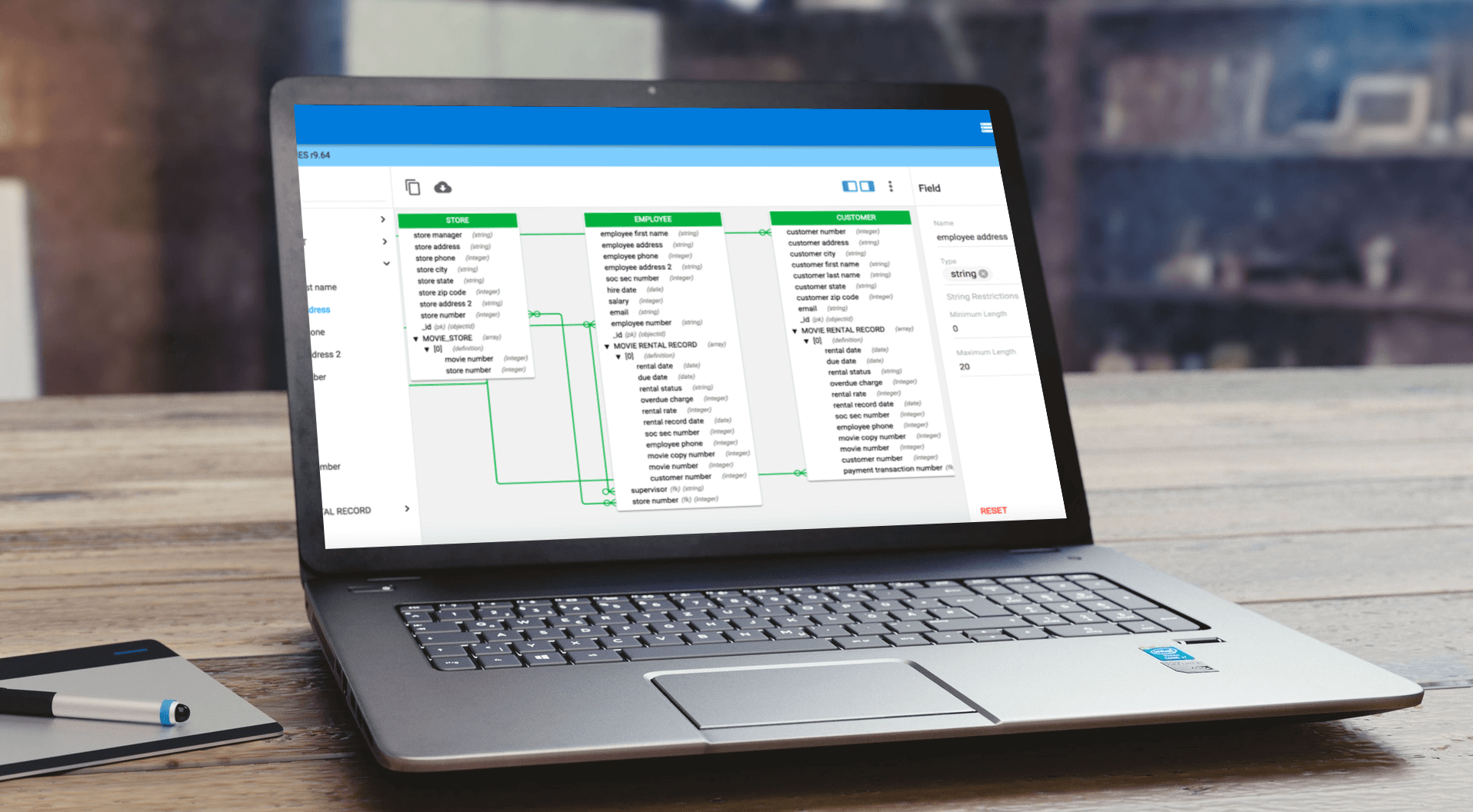

Agile application development usually goes hand in hand with NoSQL databases, so developers can take advantage of more pliable data models. NoSQL schema design is more dynamic and flexible than that of relational databases, and it supports whatever data types and query options an application requires. It also offers the processing efficiency, scalability and performance that suit Big Data and the real-time requirements of newer apps. However, NoSQL skills aren’t widespread, so tools built specifically for modeling unstructured data in NoSQL databases can help staff used to RDBMS ramp up.
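To make the idea of a flexible schema concrete, here is a minimal sketch that uses plain Python dictionaries as stand-ins for documents in a document store. The `accounts` collection, its field names and the `find` helper are all hypothetical and exist only for illustration; a real NoSQL database would provide its own query API.

```python
import json

# Hypothetical "accounts" collection: each document carries only the fields it needs.
accounts = [
    {"_id": 1, "owner": "Ada", "type": "checking", "balance": 1200.50},
    {
        "_id": 2,
        "owner": "Grace",
        "type": "brokerage",
        "holdings": [
            {"symbol": "ABC", "shares": 10},
            {"symbol": "XYZ", "shares": 4},
        ],
    },
]


def find(collection, **criteria):
    """Return documents whose top-level fields match every criterion."""
    return [
        doc
        for doc in collection
        if all(doc.get(key) == value for key, value in criteria.items())
    ]


# A new field can be added to one document without migrating the others.
accounts[0]["alerts"] = {"low_balance": 100}

brokerage = find(accounts, type="brokerage")
print(json.dumps(brokerage, indent=2))
```

The two documents share a collection even though one stores a balance and the other stores a nested list of holdings, which is the kind of variation a fixed relational table would need a schema change to absorb.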

Finally, the shift to agile development and NoSQL technology as part of more complex data architectures is driving another shift. Storage-optimized models are taking a back seat because a new approach is available to support real-time app development. It starts from what is being asked of the data and lets schemas be structured around the application’s data access requirements, so complex queries get speedy responses.
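The contrast between a storage-optimized layout and a schema shaped around a query can be sketched in a few lines. This is a generic illustration of designing for access patterns, not a description of how any particular product works; the customer and position data, and all function names, are assumptions made up for the example.

```python
# Storage-optimized (normalized) layout: one record per fact, joined at read time.
customers = {101: {"name": "Ada"}}
positions = [
    {"customer_id": 101, "symbol": "ABC", "shares": 10, "price": 5.0},
    {"customer_id": 101, "symbol": "XYZ", "shares": 4, "price": 2.5},
]


def portfolio_by_join(customer_id):
    """Answer the portfolio query by scanning and joining on every read."""
    rows = [p for p in positions if p["customer_id"] == customer_id]
    return {
        "name": customers[customer_id]["name"],
        "positions": [{"symbol": p["symbol"], "shares": p["shares"]} for p in rows],
        "total_value": sum(p["shares"] * p["price"] for p in rows),
    }


def build_portfolio_documents():
    """Precompute one document per customer, shaped like the query's answer."""
    return {customer_id: portfolio_by_join(customer_id) for customer_id in customers}


# Query-shaped layout: the join cost is paid once, when the documents are built.
portfolio_docs = build_portfolio_documents()


def portfolio_by_lookup(customer_id):
    """Answer the same query with a single key lookup."""
    return portfolio_docs[customer_id]


print(portfolio_by_lookup(101)["total_value"])  # prints 60.0
```

The trade-off is that the precomputed documents must be refreshed whenever the underlying positions change, which is why the access requirements need to be understood before the schema is fixed.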

The NoSQL Paradigm

erwin DM NoSQL takes into account all the requirements for the new application development era. In addition to its modeling tools, the solution includes patent-pending Query-Optimized Modeling™ that replaces storage-optimized modeling, giving users guidance to build schemas for optimal performance for NoSQL applications.

erwin DM NoSQL also embraces an “any-squared” approach to data management, so “any data” from “anywhere” can be visualized for greater understanding. And the solution now supports the Couchbase Data Platform in addition to MongoDB. When it is used in conjunction with erwin DG, businesses can also be assured that agility, speed and flexibility will not take precedence over the equally important need to stringently manage data.

With all this in place, enterprises will be positioned to deliver unique, real-time and responsive apps to enhance the customer experience and support new digital-transformation opportunities. At the same time, they’ll be able to preserve and extend the work they’ve already done in terms of maintaining well-governed data assets.

For more information about how to realize value from app development in the age of digital transformation with the help of data modeling and data governance, you can download our new e-book: Application Development Is New Again.