Donna Burbank’s recent Enterprise Management 360 podcast was a hive of useful information. The Global Data Strategy CEO sat down with Data Modeling experts to discuss the benefits of Data Modeling and why the practice is now more relevant than ever.

You can listen to the podcast on the Enterprise Management 360 website here – and below, you’ll find part 2 of 2. If you missed Part 1, find it here.

Guest Speakers:

Danny Sandwell, Product Manager at erwin (www.erwin.com)

Simon Carter, CEO at Sandhill Consultants (www.sandhill.co.uk)

Dr. Jean-Marie Fiechter, Business Owner Customer Reporting at Swisscom (www.swisscom.ch)

Hosted by: Donna Burbank, CEO at Global Data Strategy (www.globaldatastrategy.com)

Do you think that a Data Model is both a cost saver and revenue driver? Is data driving business profitability?

Simon Carter

Yes, I think it does. Data models obviously facilitate efficiency improvements, and they do that by identifying and eliminating duplication and promoting standardization. Efficiency improvements are going to bring you some cost reduction, and the reduction in operational risk through improved data quality can also deliver a competitive edge.

Opportunities for automation and rapid deployment of new technologies via a good understanding of your underlying data can make an organization agile, and reduce time-to-market for new products and services. So overall, absolutely, I can see it driving efficiency and reducing costs and delivering serious financial benefit.

Jean-Marie

For us, it’s mostly something that increases efficiency and in the end helps us reduce costs, because it makes maintenance of the data warehouse much easier. If you have a proper data model you don’t have that chaos that happens if you just get data in and never model it correctly.

To start with, it’s sometimes easier to just get data in and get the first reports out, but down the road when you try to maintain that chaos, it is much more costly. We like to do the thing right from the beginning; we model it, we integrate it, we avoid data redundancy that way, and it makes it much cheaper in the end.

It takes a little bit longer at the beginning to do it properly, but in the medium or long run you’re much more efficient and much faster. Sometimes you already have the data that is needed for a new report, and if you don’t have a data model, you don’t realize that you already have that data. You create a new interface and get the data a second, third, fourth or tenth time, and that takes a lot longer and is more costly.

So yes, it’s certainly more efficient, reduces costs, and because you have the data and can visualize what you already have, it certainly gives some more opportunity to get new business or new ideas, new analytics. That helps the business get ahead of the competition.
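Jean-Marie’s point about realizing that you already hold the data for a new report can be illustrated with a small sketch. It assumes the data model is available as a simple lookup from attribute name to the table where it is already modeled; the catalog, the table names and the `plan_report` helper are all hypothetical and not taken from the podcast.

```python
# Hypothetical catalog derived from a data model: attribute name -> table
# where that attribute is already modeled. All names are invented.
MODELED_ATTRIBUTES = {
    "customer_id": "dwh.customer",
    "contract_start_date": "dwh.contract",
    "monthly_revenue": "dwh.billing_summary",
}


def plan_report(required_attributes):
    """Split a report's requirements into data already modeled and data still missing."""
    reuse = {}
    missing = []
    for attribute in required_attributes:
        if attribute in MODELED_ATTRIBUTES:
            reuse[attribute] = MODELED_ATTRIBUTES[attribute]
        else:
            missing.append(attribute)
    return reuse, missing


reuse, missing = plan_report(["customer_id", "monthly_revenue", "churn_score"])
print("Reuse from existing model:", reuse)
print("Needs a new interface:", missing)
```

Only the attributes reported as missing would need a new interface; everything else can be sourced from what is already in the warehouse.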

Danny Sandwell

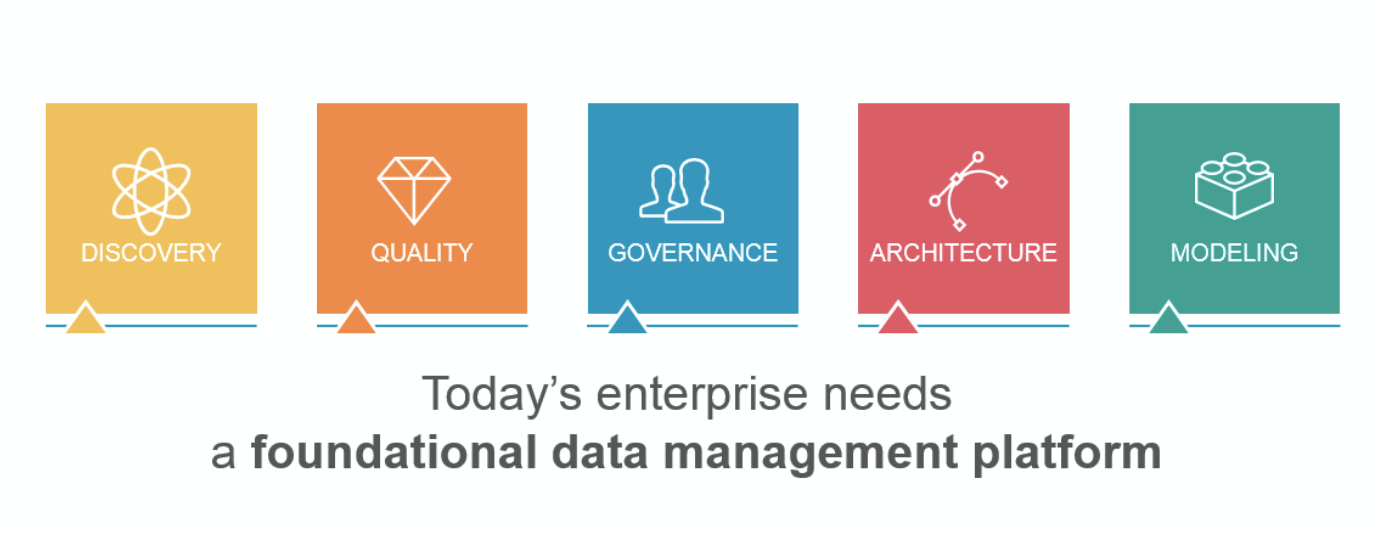

What I’m seeing is a lot of surveys of organizations, whether it’s the CIO, CEO or the CDO, talking about their approach to data management and their approach to their business from a data-driven perspective. There’s an unbelievable correlation between people that take a business-focused approach to data management, so business alignment first, technology and infrastructure second, and the growth of those companies overall.

Businesses that are a little behind the curve in terms of that alignment tend to be lower-growth companies. And because people are looking at data as a proper asset, they’re not just looking at return-on-investment, they’re looking at return-on-opportunity, and that increases significantly when you bring that data to the business and make it easy for them to access.

So, the data model is the place where business aligns with data, and having a business-driven data strategy requires that process of modeling it properly and then pushing that information out. One of the benefits of data modeling is that it doesn’t just support the initiative today. If you do it right and set it up right, it supports the initiative today, tomorrow and the day after that, at a much lower cost every time you iterate against that data model to bring value to a specific initiative.

By opening it up and allowing people to understand the data model in their own time and in their own terms, it increases trust in data. And when you have trust in data, you have strategic data usage. And when you have strategic data usage, all the statistics are showing that it leads to not just efficiencies and lower costs, but to new opportunities and growth in businesses.

Who in an organization typically uses Data Models?

Jean-Marie

In our organization, the majority of users are still technical users: the people that work in Business Intelligence, building analytics, building reports, or building ETL jobs and stuff like that. But increasingly, we also have power users outside of Business Intelligence who are sufficiently technical that they can see the use of the model.

They use the model as documentation to see what kind of data they need for a report, cockpit, BI, and how to link that data together to get something that is efficient and meaningful. So, it’s still very technical at Swisscom but it’s getting a little bit broader.

Danny Sandwell

I think for a large segment of the business world, it is still the technical or IT person. The viewing, the understanding and more of the collaboration happen on the business side. But I think there’s also a difference in terms of the maturity of the organization and the lifecycle of the organization.

Organizations that have a large legacy and have been transitioning from a traditional brick-and-mortar business to more of a digital business have some challenges with legacy infrastructure, and that legacy infrastructure requires IT to be involved a lot more hands-on, because it’s just that big and complex and there are a lot of constraints.

You have a lot of organizations that are starting up now that have no legacy to deal with and have access to the cloud and all these self-service, off-premise type capabilities, and their infrastructure is much newer. And what I’m seeing in organizations like that, is beyond just viewing data models, they’re actually starting to build the data models.

So, you’re starting to see power users or analysts on the business side, and folks like that, who are building a conceptual data model and then taking that model to whatever IT service they have, whether in the cloud or on-premise, to show what their requirements are and have those things built underneath.

So, we’re still very much in flux in terms of where an organization is, what their history is and how fast they’ve transformed in terms of becoming a digital business. But I’m seeing the trend where you have more and more business people involved in the actual building at the appropriate level, and then using that as the hand-off and contract between them and all the different service providers that they might be taking advantage of.

That holds whether it’s traditional ETL-type architectures or these new analytics use cases supported by data virtualization. At the end of the day, the business person is able to articulate their requirements and needs, and then push that down, where it used to be more of a bottom-up approach.

Simon Carter

I very much follow the line that Danny was taking there, which is that most of the doing is still done by the technical team, most of the building of the models is done by the technical team. While a lot of looking at models is done by the business users, they’re also verifying things and contributing significantly to the data model.

I’ll go back to my common taxonomy. You know, business models are being used by business analysts to validate data requirements with subject matter experts, and can be the basis of data glossaries used throughout an organization.

Application models are used by solution architects who are designing and validating solutions to store and retrieve the business data, and communicating that design to developers. Implementation models are used by database designers and administrators to create and maintain the structures needed to implement the design.

Increasingly though, the business-level metadata is being used to enable those business users to drill down into the actual data, and verify its lineage and quality. And that’s due to the ability to map a business term right through the various models to the data it describes.
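The idea of mapping a business term through the Business, Application and Implementation models down to the data it describes can be sketched as follows. This is a minimal illustration only: the `TermMapping` structure, the `trace` helper and every term, entity and column name are assumptions made for the example, not a description of how any particular modeling tool stores its metadata.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TermMapping:
    business_term: str       # entry in the business model or glossary
    application_entity: str  # attribute in the application model
    physical_column: str     # column in the implementation model


# Hypothetical mappings; every name below is invented for illustration.
MAPPINGS = [
    TermMapping("Customer", "Customer.customerNumber", "crm.cust.cust_no"),
    TermMapping("Customer", "Customer.fullName", "crm.cust.cust_nm"),
    TermMapping("Invoice", "Invoice.totalAmount", "billing.inv.tot_amt"),
]


def trace(business_term):
    """Return the (application, physical) pairs that a business term maps to."""
    return [
        (m.application_entity, m.physical_column)
        for m in MAPPINGS
        if m.business_term == business_term
    ]


for application_entity, physical_column in trace("Customer"):
    print(f"Customer -> {application_entity} -> {physical_column}")
```

With a chain like this in place, a business user who starts from a glossary term can see which physical columns sit behind it, which is the starting point for checking lineage and quality.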

With a lot of data-driven transformation being focused around new technologies like Cloud, Big Data and Internet of Things, is Data Modeling still relevant?

Simon Carter

I think data modeling is still incredibly relevant in this age of data technologies. Big Data is referring to data storage and retrieval technology, so the Business and Application models are unaffected. All we need is for the Implementation models to be able to properly represent any new technology requirement.

Danny Sandwell

Generally, a new technology comes out and everybody thinks it is the be-all and end-all that will re-engineer the world and leave everything else behind. But the reality is, you end up using Big Data for the appropriate applications that traditional data doesn’t handle, but you’re not going to rip-and-replace all the infrastructure that you have underneath supporting your traditional business data.

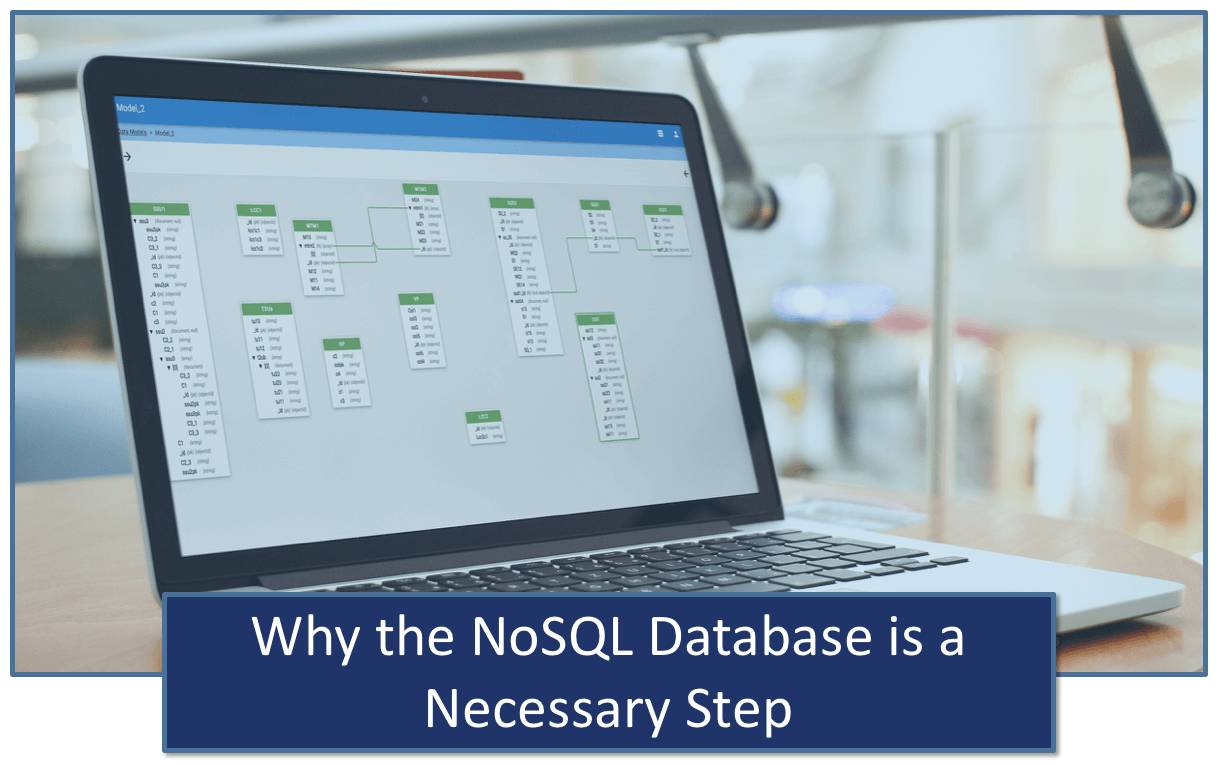

So, we end up with a hybrid data architecture. And with that hybrid data architecture, it becomes even more important to have a data model because there are some significant differences in terms of where data may physically sit in the organization.

You know, people get this new technology and they think they don’t need any models and they know how to work with the technology. And that works when things are very encapsulated, when things are together and they’re not looking for integration. But the reality is, whatever is in Big Data probably needs to be integrated with the rest of the data.

So, what we’re seeing is at the outset, people are using the data model to document what is in those Big Data instances, because it’s a bit of a black box to the business, and the business is where the data drives value – so the business requires it. And as we see more of an impetus for proper data governance, both to manage the assets that are strategic in our organization, but also to respond to the legislative and regulatory compliance requirements that are now becoming a reality for most businesses, there is a need there.

First off, it’s a documentation tool so that people can see data no matter where it sits, in the same format, and relate to that data from a business perspective. It’s also about building it into the architecture so you can see that it’s governed and managed with the same rigor as the rest of your data, so you can establish trust in data across your organization.

Jean-Marie

I think what we see at our place, when I look at the Big Data cluster that we have, is a mix. If you take a one-time shot at the data and then do some analytics, then you’re probably using something like schema on read and you don’t really have a data model.

But as soon as you use a Big Data cluster to do analytics in a repetitive way, where you have the same questions popping up every day, every week or every month, then you certainly will have some parts of your Big Data cluster that are schema on write, and then you have a data model. Because that’s the only way you can ensure that your analytics, data mining and whatnot always encounter the same structure of the data.

You have some parts of the Big Data cluster that are not modeled because they are very transient. And you have some parts that are used as a source for your data warehouse or other analytics systems. Those are definitely modeled, otherwise you waste too much time every time something changes.
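The contrast between schema on read and schema on write described above can be sketched in a few lines. This is a simplified illustration under stated assumptions: the raw events, the `EVENT_SCHEMA` dictionary and both helper functions are hypothetical, and a real cluster would rely on its own storage formats and tooling rather than plain Python.

```python
import json

# Hypothetical raw events as they might land in a cluster; field names are invented.
RAW_EVENTS = [
    '{"customer_id": 1, "amount": "19.90"}',
    '{"customer_id": 2}',  # this record has no "amount" field
]

# Schema on write: the expected structure is declared up front.
EVENT_SCHEMA = {"customer_id": int, "amount": float}


def write_with_schema(raw_line):
    """Validate and convert a record before storing it; reject anything that does not fit."""
    record = json.loads(raw_line)
    typed = {}
    for field, field_type in EVENT_SCHEMA.items():
        if field not in record:
            raise ValueError(f"missing field: {field}")
        typed[field] = field_type(record[field])
    return typed


def read_without_schema(raw_line):
    """Schema on read: keep the raw line as-is and interpret it only at query time."""
    record = json.loads(raw_line)
    return float(record.get("amount", 0.0))


stored = []
rejected = []
for line in RAW_EVENTS:
    try:
        stored.append(write_with_schema(line))
    except ValueError as error:
        rejected.append((line, str(error)))

print("Stored with a guaranteed structure:", stored)
print("Rejected at write time:", rejected)
print("Ad hoc total at read time:", sum(read_without_schema(line) for line in RAW_EVENTS))
```

The schema-on-read path is quick for a one-off question, but each query has to decide for itself how to treat missing or oddly typed fields. The schema-on-write path does that work once, so repeated analytics always meet the same structure.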

For more Data Modeling insight, follow us on Twitter here, and join our erwin Group on LinkedIn.