Big Data is causing complexity for many organizations, not just because of the volume of data they’re collecting, but also because of its variety.

Big Data often consists of unstructured data that streams into businesses from social media networks, internet-connected sensors, and more. But the data operations at many organizations were not designed to handle this flood of unstructured data.

Dealing with the volume, velocity and variety of Big Data is causing many organizations to re-think how they store and govern their data. A perfect example is the data warehouse. The people who built and manage the data warehouse at your organization built something that made sense to them at the time. They understood what data was stored where and why, as well as how it was used by business units and applications.

The era of Big Data introduced inexpensive data lakes to some organizations’ data operations, but as vast amounts of data poured into these lakes, many IT departments found themselves managing a data swamp instead.

In a perfect world, your organization would treat Big Data like any other type of data. But, alas, the world is not perfect. In reality, practicality and human nature intervene. Many new technologies, when first adopted, are separated from the rest of the infrastructure.

“New technologies are often looked at in a vacuum, and then built in a silo,” says Danny Sandwell, director of product marketing for erwin, Inc.

That leaves many organizations with parallel collections of data: one for so-called “traditional” data and one for Big Data.

There are a few problems with this outcome. For one, silos in IT have a long history of keeping organizations from understanding what they have, where it is, why they need it, and whether it’s of any value. They also have a tendency to increase costs because they don’t share common IT resources, leading to redundant infrastructure and complexity. Finally, silos usually mean increased risk.

But there’s another reason why parallel operations for Big Data and traditional data don’t make much sense: The users simply don’t care.

At the end of the day, your users want access to the data they need to do their jobs, and whether IT considers it Big Data, little data, or medium-sized data isn’t important. What’s most important is that the data is the right data – meaning it’s accurate, relevant and can be used to support or oppose a decision.

How Data Governance Turns Big Data into Just Plain Data

According to a November 2017 survey by erwin and UBM, 21 percent of respondents cited Big Data as a driver of their data governance initiatives.

In today’s data-driven world, data governance can help your business understand what data it has, how good it is, where it is, and how it’s used. The erwin/UBM survey found that 52 percent of respondents said data is critically important to their organization and they have a formal data governance strategy in place. But almost as many respondents (46 percent) said they recognize the value of data to their organization but don’t have a formal governance strategy.

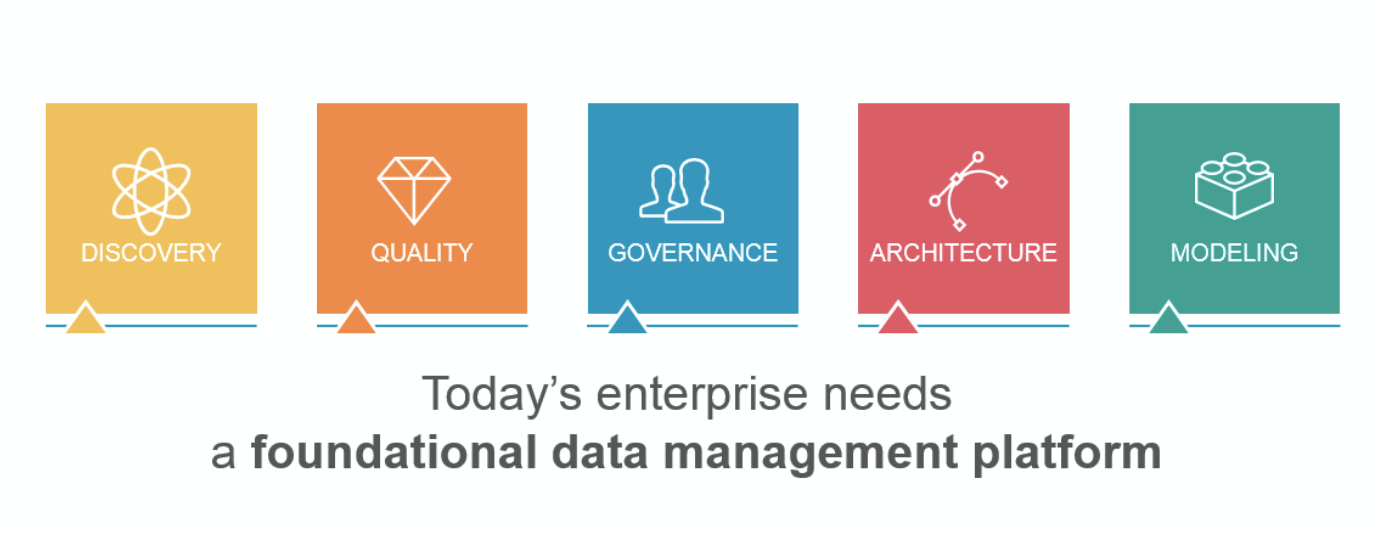

A holistic approach to data governance includes these key components:

- An enterprise architecture component is important because it aligns IT and the business, mapping a company’s applications and the associated technologies and data to the business functions they enable. By integrating data governance with enterprise architecture, businesses can define application capabilities and interdependencies in the context of enterprise strategy, then prioritize technology investments so they align with business goals and produce the desired outcomes.

- A business process and analysis component defines how the business operates and ensures employees understand and are accountable for carrying out the processes for which they are responsible. Enterprises can clearly define, map and analyze workflows and build models to drive process improvements, as well as identify business practices susceptible to the greatest security, compliance or other risks and where controls are most needed to mitigate exposures.

- A data modeling component is the best way to design and deploy new databases with high-quality data sources and support application development. The ability to discover, visualize and analyze “any data” from “anywhere” cost-effectively and efficiently underpins large-scale data integration, master data management, Big Data and business intelligence/analytics. It also makes it possible to synthesize, standardize and store data sources from a single design, as well as reuse artifacts across projects.

When data governance is done right, and it’s woven into the structure and architecture of your business, it helps your organization accept new technologies and the new sources of data they provide as they come along. This makes it easier to see ROI and ROO from your Big Data initiatives by managing Big Data in the same manner your organization treats all of its data – by understanding its metadata, defining its relationships, and defining its quality.
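To make the idea of treating Big Data like any other data more concrete, here is a minimal sketch in Python. It is illustrative only: the `DataAsset` record, its field names, the `governance_gaps` helper and the 0.9 completeness threshold are all assumptions made for this example, not part of any erwin product or of the survey. The point it tries to show is that the same three checks (metadata, relationships, quality) can be applied to a warehouse table and a sensor feed alike.

```python
from dataclasses import dataclass, field


@dataclass
class DataAsset:
    """Hypothetical catalog record; every field name here is illustrative."""
    name: str
    owner: str                 # metadata: who is accountable for the data
    description: str           # metadata: what the data means
    related_to: list[str] = field(default_factory=list)  # relationships to other assets
    completeness: float = 0.0  # quality: share of required values populated (0 to 1)


def governance_gaps(asset: DataAsset, min_completeness: float = 0.9) -> list[str]:
    """Return the governance checks an asset fails, regardless of where it came from."""
    gaps = []
    if not asset.owner or not asset.description:
        gaps.append("metadata")
    if not asset.related_to:
        gaps.append("relationships")
    if asset.completeness < min_completeness:
        gaps.append("quality")
    return gaps


# One "traditional" asset and one "Big Data" asset, checked the same way.
warehouse_table = DataAsset("orders", "finance", "Confirmed customer orders", ["customers"], 0.98)
sensor_stream = DataAsset("sensor_feed", "", "Raw device telemetry", [], 0.62)

for asset in (warehouse_table, sensor_stream):
    print(asset.name, governance_gaps(asset))
```

In this sketch the sensor feed fails all three checks while the warehouse table passes, which is the kind of gap a single governance process would surface no matter which silo the data lives in.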

Furthermore, businesses that apply sound data governance will find themselves with a template or roadmap they can use to integrate Big Data throughout their organizations.

If your business isn’t capitalizing on the Big Data it’s collecting, then it’s throwing away dollars spent on data collection, storage and analysis. Just as bad, however, is a situation where all of that data and analysis is leading to the wrong decisions and poor business outcomes because the data isn’t properly governed.

Previous posts:

- Compliance Concerns Drive Many to Data Governance

- How Data Governance Can Help Build Customer Trust, Satisfaction

- Why Data Governance is the Key to Better Decision-Making

- Data Plays Huge Role in Reputation Management

- Data Governance Helps Build a Solid Foundation for Analytics

You can determine how effective your current data governance initiative is by taking erwin’s DG RediChek.